license: cc-by-nc-4.0
tags:
- moe
- merge
- mergekit
base_model:
- mlabonne/AlphaMonarch-7B
- beowolx/CodeNinja-1.0-OpenChat-7B
- SanjiWatsuki/Kunoichi-DPO-v2-7B
- mlabonne/NeuralDaredevil-7B
model-index:
- name: Beyonder-4x7B-random-lora
  results:
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: AI2 Reasoning Challenge (25-Shot)
      type: ai2_arc
      config: ARC-Challenge
      split: test
      args:
        num_few_shot: 25
    metrics:
    - type: acc_norm
      value: 71.25
      name: normalized accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Aratako/Beyonder-4x7B-random-lora
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: HellaSwag (10-Shot)
      type: hellaswag
      split: validation
      args:
        num_few_shot: 10
    metrics:
    - type: acc_norm
      value: 87.4
      name: normalized accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Aratako/Beyonder-4x7B-random-lora
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: MMLU (5-Shot)
      type: cais/mmlu
      config: all
      split: test
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 64.78
      name: accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Aratako/Beyonder-4x7B-random-lora
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: TruthfulQA (0-shot)
      type: truthful_qa
      config: multiple_choice
      split: validation
      args:
        num_few_shot: 0
    metrics:
    - type: mc2
      value: 70.49
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Aratako/Beyonder-4x7B-random-lora
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: Winogrande (5-shot)
      type: winogrande
      config: winogrande_xl
      split: validation
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 82.16
      name: accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Aratako/Beyonder-4x7B-random-lora
      name: Open LLM Leaderboard
  - task:
      type: text-generation
      name: Text Generation
    dataset:
      name: GSM8k (5-shot)
      type: gsm8k
      config: main
      split: test
      args:
        num_few_shot: 5
    metrics:
    - type: acc
      value: 67.4
      name: accuracy
    source:
      url: >-
        https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard?query=Aratako/Beyonder-4x7B-random-lora
      name: Open LLM Leaderboard

Beyonder-4x7B-v3-random-lora

The idea is simple: if heuristic methods for setting the gate parameters of mergekit-based MoE models work well, then fine-tuning only the gate parameters might produce a better-performing model.

This model tests that idea. Unfortunately, performance degraded slightly relative to mlabonne/Beyonder-4x7B-v3, but I am sharing the model as an experimental result.

Model Details

First, I created an MoE model using mergekit with gate_mode=random and the following four models (same as mlabonne/Beyonder-4x7B-v3):

- mlabonne/AlphaMonarch-7B

- beowolx/CodeNinja-1.0-OpenChat-7B

- SanjiWatsuki/Kunoichi-DPO-v2-7B

- mlabonne/NeuralDaredevil-7B
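A minimal sketch of what a mergekit-moe config for this merge might look like, rendered as YAML text from Python. The four expert names and `gate_mode: random` come from the description above; the choice of `base_model` and the `dtype` value are assumptions, since the card does not state them.

```python
# Sketch of a mergekit-moe style config for the merge described above.
# ASSUMPTIONS (not stated in the model card): which model serves as
# base_model, and the dtype. Only the experts and gate_mode are from the card.

EXPERTS = [
    "mlabonne/AlphaMonarch-7B",
    "beowolx/CodeNinja-1.0-OpenChat-7B",
    "SanjiWatsuki/Kunoichi-DPO-v2-7B",
    "mlabonne/NeuralDaredevil-7B",
]


def build_moe_config(experts, base_model, gate_mode="random", dtype="bfloat16"):
    """Render a mergekit-moe style YAML config as plain text (stdlib only)."""
    lines = [
        f"base_model: {base_model}",
        f"gate_mode: {gate_mode}",
        f"dtype: {dtype}",
        "experts:",
    ]
    for name in experts:
        lines.append(f"  - source_model: {name}")
    return "\n".join(lines) + "\n"


# Hypothetical choice: use the first expert as the base model.
config_text = build_moe_config(EXPERTS, base_model=EXPERTS[0])
print(config_text)
```

Saved to a file, text like this is the kind of input the `mergekit-moe` command takes alongside an output directory. Some mergekit versions may also expect a `positive_prompts` entry per expert even though random gate initialization does not use it.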

Then, I used LoRA to fine-tune only the gate parameters by specifying "gate" in target_modules. I used the Mistral prompt format and the following fine-tuning data:

- 5000 random samples from llm-jp/oasst1-21k-en

- 5000 random samples from databricks/databricks-dolly-15k

- 5000 random samples from hieunguyenminh/roleplay

- 5000 random samples from meta-math/MetaMathQA

- 5000 random samples from m-a-p/CodeFeedback-Filtered-Instruction
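The data preparation above can be sketched roughly as follows: draw 5000 rows per dataset, then wrap each instruction/response pair in the Mistral instruct template. The helper names, the random seed, and the toy rows are hypothetical, and the exact whitespace and BOS/EOS handling used in the actual run is not given in this card.

```python
import random


def sample_examples(rows, k=5000, seed=0):
    """Draw k rows without replacement. The seed is an assumption for reproducibility."""
    rng = random.Random(seed)
    return rng.sample(rows, k)


def to_mistral_prompt(instruction, response):
    """Format one instruction/response pair in the Mistral instruct style."""
    return f"<s>[INST] {instruction} [/INST] {response}</s>"


# Hypothetical stand-in for one loaded dataset: (instruction, response) pairs.
toy_rows = [(f"Question {i}", f"Answer {i}") for i in range(20000)]

subset = sample_examples(toy_rows)
texts = [to_mistral_prompt(question, answer) for question, answer in subset]

print(len(texts))
print(texts[0])
```

Repeating the sampling step for each of the five datasets and concatenating the results would give 25,000 training examples in total.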

Training ran on RunPod using 4x A6000 GPUs. The main training parameters were as follows:

- lora_r: 128

- lora_alpha: 256

- lora_dropout: 0.05

- lora_target_modules: "gate"

- learning_rate: 3e-4

- num_train_epochs: 5

- batch_size: 64

- max_seq_length: 2048
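To make the settings above more concrete, here is a small sketch of what they imply. It assumes Mistral-7B dimensions (hidden size 4096, 32 layers), Mixtral-style module names with one linear router per layer, that `batch_size` is the effective global batch, and that all 25,000 samples are used without packing. None of these are stated in the card. The name matching only imitates the suffix-style matching PEFT applies to a list of target modules.

```python
import math

# Values taken from the parameter list above.
LORA_R = 128
LORA_ALPHA = 256
BATCH_SIZE = 64
NUM_EPOCHS = 5
NUM_SAMPLES = 5 * 5000  # five datasets, 5000 samples each

# ASSUMPTIONS (not stated in the model card): Mistral-7B dimensions and
# Mixtral-style module names, with one linear router per decoder layer.
HIDDEN_SIZE = 4096
NUM_LAYERS = 32
NUM_EXPERTS = 4
EXAMPLE_MODULES = [
    "model.layers.0.self_attn.q_proj",
    "model.layers.0.block_sparse_moe.gate",
    "model.layers.0.block_sparse_moe.experts.0.w1",
    "model.layers.0.block_sparse_moe.experts.0.w2",
]


def matches_target(module_name, targets):
    """Match on the last name component, similar to PEFT's list-based targeting."""
    return any(module_name == t or module_name.endswith("." + t) for t in targets)


def lora_param_count(in_features, out_features, r):
    """Parameters LoRA adds to one linear layer: A is (r x in), B is (out x r)."""
    return r * in_features + out_features * r


selected = [m for m in EXAMPLE_MODULES if matches_target(m, ["gate"])]
scaling = LORA_ALPHA / LORA_R
steps_per_epoch = math.ceil(NUM_SAMPLES / BATCH_SIZE)
total_steps = steps_per_epoch * NUM_EPOCHS
router_params = HIDDEN_SIZE * NUM_EXPERTS * NUM_LAYERS
adapter_params = lora_param_count(HIDDEN_SIZE, NUM_EXPERTS, LORA_R) * NUM_LAYERS

print(selected)
print(scaling, steps_per_epoch, total_steps)
print(router_params, adapter_params)
```

Under these assumptions, only the router module matches "gate", the LoRA scaling factor is 2.0, and training runs for about 391 optimizer steps per epoch (1,955 in total). The adapters add roughly 16.8M trainable parameters on top of 524,288 router weights. Because each router has only 4 outputs, the learned update to it has rank at most 4 regardless of `lora_r`.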

Evaluation

The evaluation results show a slight degradation relative to mlabonne/Beyonder-4x7B-v3: the Nous average drops from 61.91 to 60.29, and the MT-Bench average drops from 8.34 to 8.09 (1-turn) and from 7.46 to 7.02 (2-turn). The approach itself may simply not be effective, but other possible causes include the dataset, the training parameters, and the training setup (such as prompt formatting).

Nous (LLM AutoEval)

| Model | Average | AGIEval | GPT4All | TruthfulQA | Bigbench |
|---|---|---|---|---|---|
| mlabonne/AlphaMonarch-7B | 62.74 | 45.37 | 77.01 | 78.39 | 50.2 |
| mlabonne/Beyonder-4x7B-v3 | 61.91 | 45.85 | 76.67 | 74.98 | 50.12 |
| Aratako/Beyonder-4x7B-v3-random-lora | 60.29 | 45.82 | 76.69 | 69.94 | 48.72 |
| mlabonne/NeuralDaredevil-7B | 59.39 | 45.23 | 76.2 | 67.61 | 48.52 |
| SanjiWatsuki/Kunoichi-DPO-v2-7B | 58.29 | 44.79 | 75.05 | 65.68 | 47.65 |
| mlabonne/Beyonder-4x7B-v2 | 57.13 | 45.29 | 75.95 | 60.86 | 46.4 |
| beowolx/CodeNinja-1.0-OpenChat-7B | 50.35 | 39.98 | 71.77 | 48.73 | 40.92 |

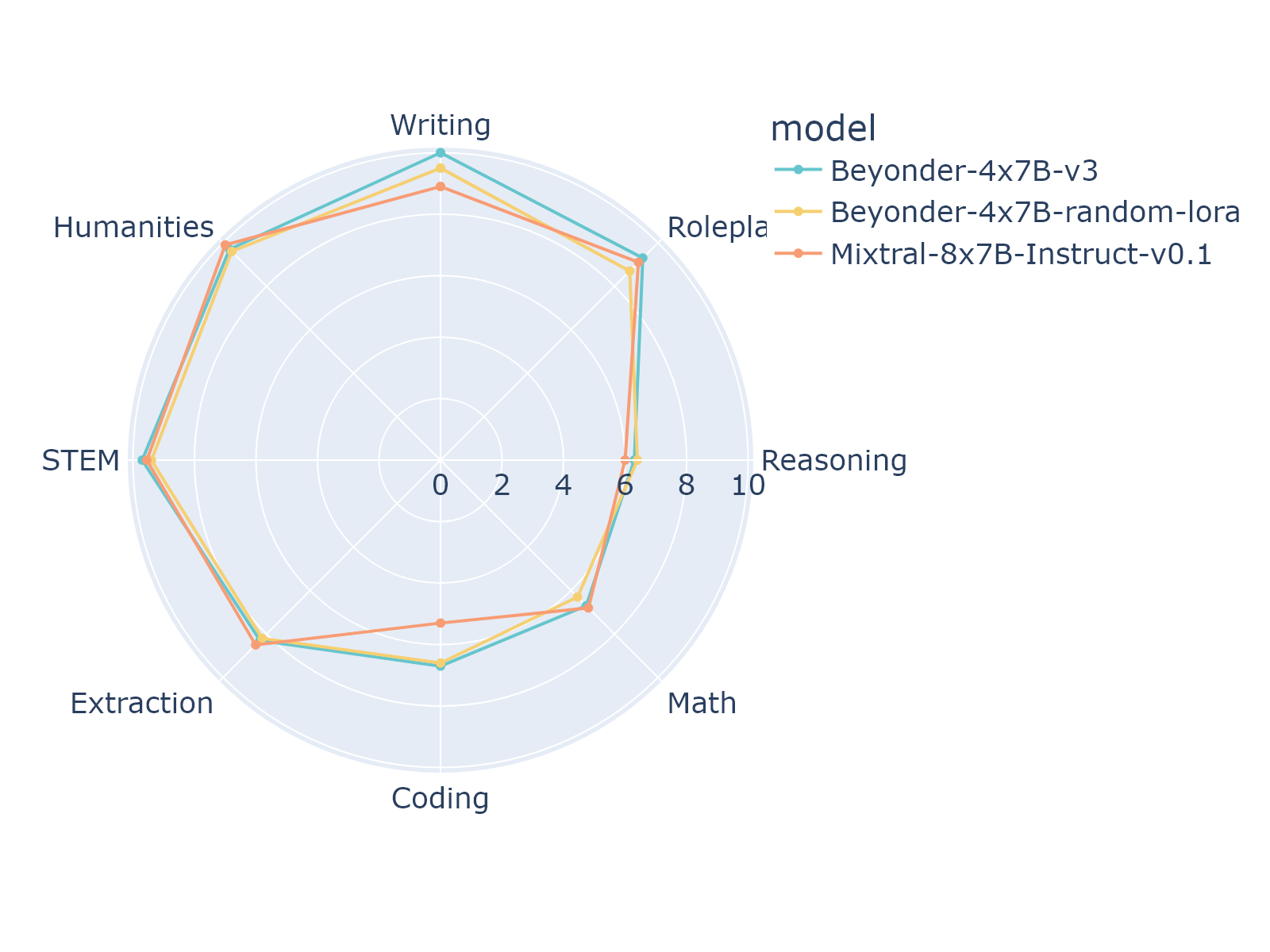
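As a quick check on where the Nous scores diverge, the following sketch recomputes the Average column for the two Beyonder-4x7B-v3 variants and lists the per-benchmark differences. The scores are copied from the table; the variable names are illustrative only.

```python
BENCHMARKS = ["AGIEval", "GPT4All", "TruthfulQA", "Bigbench"]

# Scores copied from the Nous table above.
beyonder_v3 = [45.85, 76.67, 74.98, 50.12]
random_lora = [45.82, 76.69, 69.94, 48.72]


def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


# Per-benchmark change of the gate-tuned model relative to Beyonder-4x7B-v3.
deltas = {
    name: round(new - old, 2)
    for name, old, new in zip(BENCHMARKS, beyonder_v3, random_lora)
}

print(f"v3 average:          {mean(beyonder_v3):.3f}")
print(f"random-lora average: {mean(random_lora):.3f}")
for name, delta in deltas.items():
    print(f"{name:>10}: {delta:+.2f}")
```

On these numbers, almost all of the gap comes from TruthfulQA (-5.04) and Bigbench (-1.40), while AGIEval and GPT4All differ by at most 0.03.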

MT-Bench

1-turn

| Model | Coding | Extraction | Humanities | Math | Reasoning | Roleplay | STEM | Writing | avg_score |
|---|---|---|---|---|---|---|---|---|---|
| mlabonne/Beyonder-4x7B-v3 | 6.7 | 8.3 | 9.7 | 6.7 | 6.3 | 9.3 | 9.7 | 10.0 | 8.33750 |
| Aratako/Beyonder-4x7B-v3-random-lora | 6.6 | 8.2 | 9.6 | 6.3 | 6.4 | 8.7 | 9.4 | 9.5 | 8.08750 |
| mistralai/Mixtral-8x7B-Instruct-v0.1 | 5.3 | 8.5 | 9.9 | 6.8 | 6.0 | 9.1 | 9.55 | 8.9 | 8.00625 |

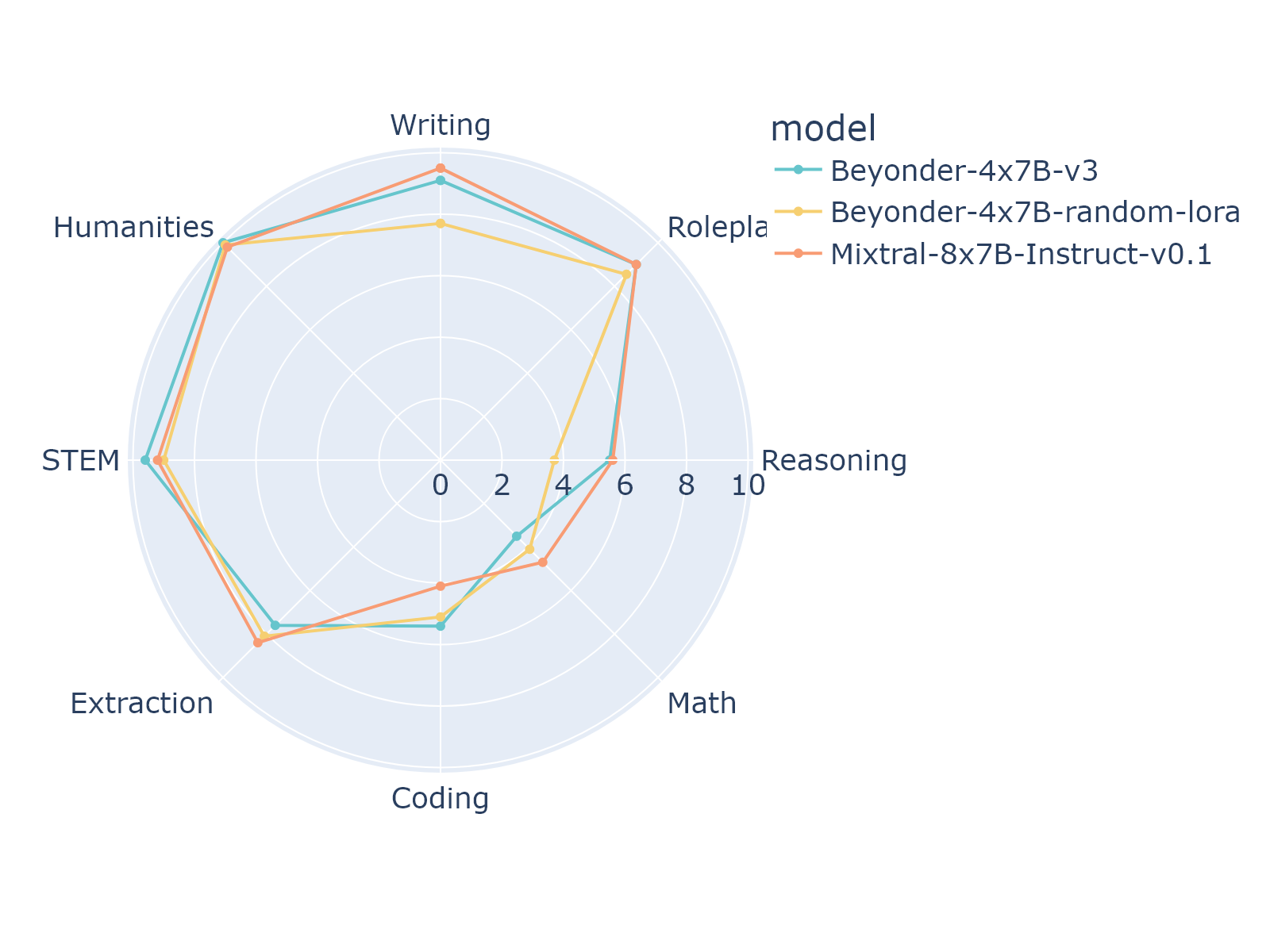

2-turn

| Model | Coding | Extraction | Humanities | Math | Reasoning | Roleplay | STEM | Writing | avg_score |
|---|---|---|---|---|---|---|---|---|---|
| mlabonne/Beyonder-4x7B-v3 | 5.4 | 7.6 | 10.0 | 3.5 | 5.5 | 9.0 | 9.6 | 9.1 | 7.46250 |
| Aratako/Beyonder-4x7B-v3-random-lora | 5.1 | 8.1 | 9.9 | 4.1 | 3.7 | 8.55 | 9.0 | 7.7 | 7.01875 |
| mistralai/Mixtral-8x7B-Instruct-v0.1 | 4.1 | 8.4 | 9.8 | 4.7 | 5.6 | 9.0 | 9.2 | 9.5 | 7.53750 |
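The MT-Bench tables can be read per category as well as by average. This sketch, using only scores copied from the two tables, recomputes `avg_score` and finds the categories where the gate-tuned model moved most relative to Beyonder-4x7B-v3. The dictionary layout and function name are illustrative.

```python
CATEGORIES = ["Coding", "Extraction", "Humanities", "Math",
              "Reasoning", "Roleplay", "STEM", "Writing"]

# Scores copied from the MT-Bench tables above.
SCORES = {
    "1-turn": {
        "v3": [6.7, 8.3, 9.7, 6.7, 6.3, 9.3, 9.7, 10.0],
        "random-lora": [6.6, 8.2, 9.6, 6.3, 6.4, 8.7, 9.4, 9.5],
    },
    "2-turn": {
        "v3": [5.4, 7.6, 10.0, 3.5, 5.5, 9.0, 9.6, 9.1],
        "random-lora": [5.1, 8.1, 9.9, 4.1, 3.7, 8.55, 9.0, 7.7],
    },
}


def summarize(turn):
    """Return (avg of random-lora, largest drop, largest gain) for one turn."""
    base = SCORES[turn]["v3"]
    tuned = SCORES[turn]["random-lora"]
    deltas = {c: round(t - b, 2) for c, b, t in zip(CATEGORIES, base, tuned)}
    worst = min(deltas, key=deltas.get)
    best = max(deltas, key=deltas.get)
    avg = sum(tuned) / len(tuned)
    return avg, (worst, deltas[worst]), (best, deltas[best])


for turn in SCORES:
    avg, worst, best = summarize(turn)
    print(f"{turn}: avg={avg:.5f} largest drop={worst} largest gain={best}")
```

In the first turn the largest drop is Roleplay (-0.6). In the second turn the drop is concentrated in Reasoning (-1.8) and Writing (-1.4), while Math (+0.6) and Extraction (+0.5) improve.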

Open LLM Leaderboard Evaluation Results

Detailed results can be found here

| Metric | Value |
|---|---|
| Avg. | 73.91 |
| AI2 Reasoning Challenge (25-Shot) | 71.25 |
| HellaSwag (10-Shot) | 87.40 |
| MMLU (5-Shot) | 64.78 |
| TruthfulQA (0-shot) | 70.49 |
| Winogrande (5-shot) | 82.16 |
| GSM8k (5-shot) | 67.40 |