---
language:
- en
- es
license: apache-2.0
library_name: transformers
tags:
- generated_from_trainer
model-index:
- name: Agente-Director-Qwen2-7Bron-Instruct_CODE_Python_English_GGUF_16bit
  results:
  - task:
      name: Text Classification
      type: text-classification
    dataset:
      name: glue
      type: glue
      config: mrpc
      split: validation
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.473
    - name: F1
      type: f1
      value: 0.4917
---

Agente-Director-Qwen2-7Bron-Instruct_CODE_Python_English_GGUF_16bit

- Developed by: Agnuxo

- License: apache-2.0

- Finetuned from model: Qwen/Qwen2-7B-Instruct

This model was fine-tuned using Unsloth and Hugging Face's TRL library.

Model Details

- Model Parameters: 7,070.63 million (about 7.07 billion)

- Model Size: 13.61 GB

- Quantization: 16-bit (GGUF)

- Estimated GPU Memory Required: ~13 GB

Note: The actual memory usage may vary depending on the specific hardware and runtime environment.
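
As a rough sanity check on the figures above, the following sketch estimates the memory taken by the weights alone, assuming 2 bytes per parameter for 16-bit storage. The function name `weight_memory` is illustrative and not part of any library. The estimate ignores the KV cache, activations, and runtime overhead, so it will not reproduce the reported 13.61 GB exactly; it only shows that a figure of about 13 GB is in the range expected for 16-bit weights.

```python
# Weights-only memory estimate for a 16-bit model (illustrative sketch).
PARAMS_MILLIONS = 7070.63  # parameter count listed in this model card
BYTES_PER_PARAM = 2        # assumption: each weight is stored in 16 bits


def weight_memory(params_millions, bytes_per_param=BYTES_PER_PARAM):
    """Return the weight footprint as (decimal GB, binary GiB)."""
    total_bytes = params_millions * 1e6 * bytes_per_param
    return total_bytes / 1e9, total_bytes / 2**30


gb, gib = weight_memory(PARAMS_MILLIONS)
print(f"{gb:.2f} GB ({gib:.2f} GiB)")  # prints "14.14 GB (13.17 GiB)"
```

Longer contexts and larger batch sizes add to this baseline, which is one reason actual usage varies between setups.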

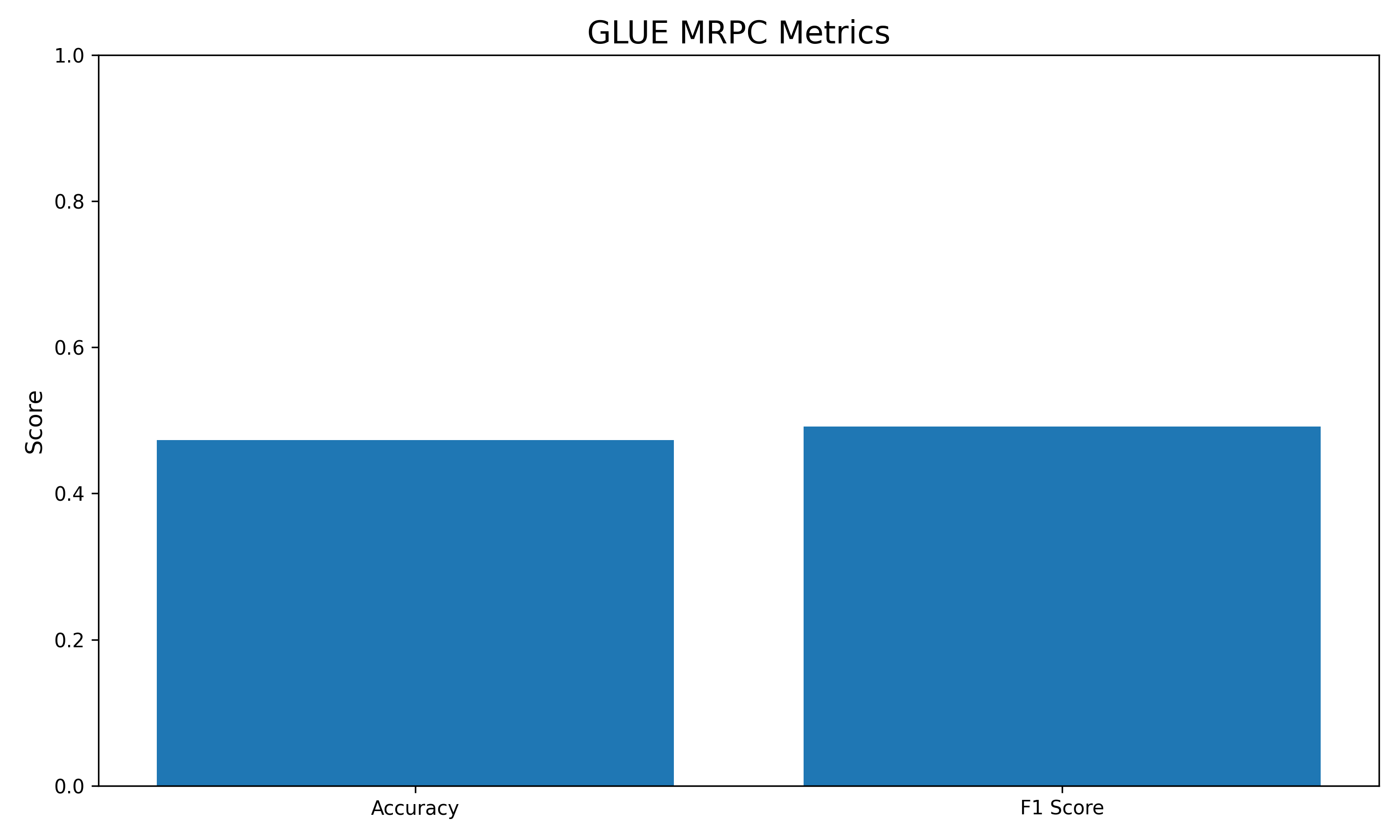

Benchmark Results

The fine-tuned model was evaluated on the GLUE MRPC task (validation split):

- Accuracy: 0.4730

- F1 Score: 0.4917
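
For readers unfamiliar with the two metrics, here is a minimal sketch of how accuracy and F1 are computed for a binary task such as MRPC, where label 1 marks a paraphrase pair. The labels and predictions below are toy values chosen for illustration, not outputs of this model, and `accuracy_and_f1` is a hypothetical helper rather than the evaluation script used for the numbers above.

```python
def accuracy_and_f1(y_true, y_pred, positive=1):
    """Compute accuracy and F1 (for the positive class) on binary labels."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and equal in length")

    pairs = list(zip(y_true, y_pred))
    correct = sum(t == p for t, p in pairs)
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)

    accuracy = correct / len(pairs)
    # F1 = 2*TP / (2*TP + FP + FN); defined as 0.0 when there are no positives.
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return accuracy, f1


# Toy data (not model output): 1 = paraphrase, 0 = not a paraphrase.
labels = [1, 0, 1, 1, 0, 1]
preds = [1, 1, 0, 1, 0, 0]

acc, f1 = accuracy_and_f1(labels, preds)
print(f"accuracy={acc:.4f} f1={f1:.4f}")  # prints "accuracy=0.5000 f1=0.5714"
```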

For more details, visit my GitHub.

Thanks for your interest in this model!